Autonomous driving features in 2025 EVs? Dude, it’s gonna be wild. Forget self-driving cars that are totally hands-off; we’re talking about Level 2 autonomy, which is already pretty sweet, but getting even better. Think seriously upgraded driver-assist tech—we’re looking at smarter lane keeping, more reliable adaptive cruise control, and maybe even some seriously impressive parking assistance. This means less stress on the road, and potentially, more time to catch up on podcasts during your commute.

But it’s not all sunshine and rainbows; there are safety concerns and tech hurdles to overcome, so let’s dive into the details.

This deep dive explores the advancements in sensor technology, data processing, and overall safety features predicted for electric vehicles by 2025. We’ll compare different manufacturers’ approaches, discuss consumer perception, and even gaze into the crystal ball to predict future developments. Buckle up, it’s going to be a ride!

Level 2 Autonomous Driving Features in 2025 EVs

Level 2 autonomous driving, which SAE defines as partial driving automation, is delivered through Advanced Driver-Assistance Systems (ADAS) and is rapidly evolving in the electric vehicle (EV) market. It doesn’t offer fully autonomous driving: the system handles steering and speed together, but the driver must supervise at all times. Even so, it provides a significant boost in safety and convenience through features like adaptive cruise control and lane-keeping assist. By 2025, we expect to see even more sophisticated Level 2 systems integrated into EVs, enhancing the driving experience and pushing the boundaries of what’s possible without a fully self-driving car.

Current State of Level 2 ADAS in EVs

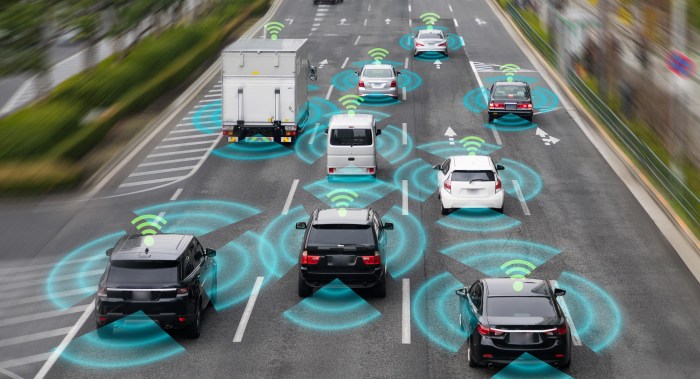

Currently, Level 2 ADAS features are fairly common in many EVs. Most manufacturers offer packages that include adaptive cruise control (ACC), which maintains a set distance from the vehicle ahead, and lane-keeping assist (LKA), which helps the driver stay within their lane. However, the sophistication and reliability of these systems vary significantly between manufacturers and even within model lines of the same manufacturer.

Some systems offer more advanced features like lane centering, which actively keeps the vehicle centered in its lane, while others may only provide lane departure warnings. The quality of sensor fusion (combining data from cameras, radar, and, on some vehicles, lidar) also plays a crucial role in the overall performance and safety of these systems.
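To make the "maintains a set distance" idea behind ACC concrete, here is a minimal sketch of a constant time-gap controller. It is an illustration only, not any manufacturer's implementation: the `AccConfig` fields, the gains, and the acceleration limits are all assumed values chosen for readability.

```python
from dataclasses import dataclass


@dataclass
class AccConfig:
    # All values below are illustrative assumptions, not production tuning.
    set_speed: float = 30.0       # m/s, cruise speed chosen by the driver
    time_gap: float = 1.8         # s, desired headway to the lead vehicle
    standstill_gap: float = 5.0   # m, gap to keep even at zero speed
    k_gap: float = 0.2            # gain on gap error
    k_rel: float = 0.6            # gain on relative speed
    k_cruise: float = 0.4         # gain on set-speed error
    max_accel: float = 2.0        # m/s^2
    max_decel: float = -3.5       # m/s^2


def acc_accel_command(ego_speed, lead_gap, lead_speed, cfg=None):
    """Return a longitudinal acceleration command in m/s^2.

    lead_gap and lead_speed are None when no lead vehicle is tracked.
    """
    cfg = cfg or AccConfig()
    # Plain cruise control: close the error to the driver's set speed.
    cruise = cfg.k_cruise * (cfg.set_speed - ego_speed)
    if lead_gap is None or lead_speed is None:
        command = cruise
    else:
        # The desired gap grows with speed (constant time-gap policy).
        desired_gap = cfg.standstill_gap + cfg.time_gap * ego_speed
        follow = (cfg.k_gap * (lead_gap - desired_gap)
                  + cfg.k_rel * (lead_speed - ego_speed))
        # Never accelerate harder than cruise control alone would.
        command = min(cruise, follow)
    # Clamp to comfortable acceleration and braking limits.
    return max(cfg.max_decel, min(cfg.max_accel, command))
```

Taking the smaller of the cruise and follow commands means the car never exceeds the set speed just because the vehicle ahead pulls away.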

Key Advancements in Level 2 Autonomy by 2025

Three key advancements expected in Level 2 autonomy by 2025 include improved sensor fusion, enhanced predictive capabilities, and more robust software. Improved sensor fusion will lead to more accurate and reliable data processing, allowing for smoother and safer operation of ADAS features in challenging conditions such as low light or inclement weather. Enhanced predictive capabilities, through advancements in machine learning and AI, will enable systems to anticipate potential hazards more effectively, giving drivers more time to react.

More robust software will mean fewer glitches, better system stability, and improved overall reliability, building greater driver confidence. For example, Tesla’s Autopilot system, while currently Level 2, is constantly being updated with improved software to enhance its capabilities and address limitations.

Comparison of Level 2 ADAS Features Across EV Manufacturers

Tesla, Ford, and GM represent three major players in the EV market, each offering distinct Level 2 ADAS features. Tesla’s Autopilot is known for its advanced features, including automatic lane changes and Navigate on Autopilot, but it also has a reputation for requiring more driver oversight. Ford’s BlueCruise system emphasizes hands-free driving on pre-mapped highways, prioritizing driver convenience and safety on specific routes.

GM’s Super Cruise system, similar to BlueCruise, focuses on hands-free driving on designated highways, but with a greater emphasis on driver monitoring and safety features. While all three systems offer core Level 2 functionalities, their approach to implementation and feature sets differ significantly.

Comparison of Lane-Keeping Assist Systems Across EV Models

| EV Model | Lane Keeping Assist Type | Lane Departure Warning | Lane Centering |
|---|---|---|---|
| Tesla Model 3 | Active Lane Keeping | Yes | Yes |
| Ford Mustang Mach-E | Lane-Keeping System | Yes | Yes |
| Chevrolet Bolt | Lane Departure Warning with Lane Keep Assist | Yes | Yes (with limitations) |
| Rivian R1T | Driver Assist | Yes | Yes |

Sensor Technology and Data Processing in 2025 EVs

Level 2 autonomous driving systems in 2025 EVs rely on a sophisticated suite of sensors and powerful data processing units to interpret their surroundings and make driving decisions. These systems represent a significant leap forward in automotive technology, pushing the boundaries of what’s possible in driver-assistance features. The accuracy and reliability of these systems hinge on the seamless integration and intelligent processing of data from multiple sensor modalities.

Sensor Types in Level 2 Autonomous Driving Systems

Level 2 autonomous driving systems typically employ a combination of sensor technologies to create a comprehensive understanding of the vehicle’s environment. These sensors work in concert, each offering unique strengths and weaknesses that complement one another. This redundancy is crucial for safety and robustness. The main sensor types are cameras, radar, ultrasonic sensors, and, on some vehicles, LiDAR. LiDAR provides highly accurate 3D point cloud data of the surroundings, while radar offers reliable data even in adverse weather conditions.

Cameras capture visual information, essential for object recognition and lane detection, and ultrasonic sensors detect nearby obstacles, primarily for parking assistance.

Data Fusion Algorithms for Enhanced Accuracy and Reliability

Raw data from any single sensor is not sufficient on its own for safe driver assistance. Data fusion algorithms play a critical role in combining the data from different sensor types, resolving inconsistencies, and creating a unified, coherent representation of the environment. These algorithms leverage the strengths of each sensor type to compensate for the limitations of the others. For instance, radar data can help to confirm the existence and distance of objects detected by cameras, even in low-light conditions.

This process significantly improves the accuracy and reliability of the system, mitigating the risks associated with relying on a single sensor type. Advanced algorithms, like Kalman filters and Bayesian networks, are frequently employed in this process. For example, a Kalman filter can estimate the position and velocity of other vehicles by combining noisy sensor measurements over time, producing a smoother, more reliable estimate.
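The Kalman filter example above can be sketched in a few lines. This is a minimal one-dimensional constant-velocity filter that tracks the distance to another vehicle from noisy range measurements. The class name, the `track` helper, and every noise value are assumptions picked for illustration. Production trackers work in more dimensions and fuse several sensors.

```python
class ConstantVelocityKalman:
    """1-D constant-velocity Kalman filter over the state [position, velocity]."""

    def __init__(self, pos, vel, pos_var, vel_var, accel_var, meas_var):
        self.x = [pos, vel]                         # state estimate
        self.P = [[pos_var, 0.0], [0.0, vel_var]]   # state covariance
        self.accel_var = accel_var                  # process noise (unmodelled acceleration)
        self.meas_var = meas_var                    # variance of the position measurement

    def predict(self, dt):
        """Propagate state and covariance forward by dt seconds."""
        pos, vel = self.x
        self.x = [pos + dt * vel, vel]
        (p00, p01), (p10, p11) = self.P
        q = self.accel_var
        # P = F P F^T + Q, with F = [[1, dt], [0, 1]] written out by hand.
        self.P = [
            [p00 + dt * (p01 + p10) + dt * dt * p11 + q * dt ** 4 / 4,
             p01 + dt * p11 + q * dt ** 3 / 2],
            [p10 + dt * p11 + q * dt ** 3 / 2,
             p11 + q * dt ** 2],
        ]

    def update(self, measured_pos):
        """Blend in one noisy position measurement."""
        (p00, p01), (p10, p11) = self.P
        innovation = measured_pos - self.x[0]
        s = p00 + self.meas_var              # innovation variance
        k0, k1 = p00 / s, p10 / s            # Kalman gain for position and velocity
        self.x = [self.x[0] + k0 * innovation, self.x[1] + k1 * innovation]
        self.P = [[(1 - k0) * p00, (1 - k0) * p01],
                  [p10 - k1 * p00, p11 - k1 * p01]]


def track(measurements, dt, meas_var=0.25):
    """Run the filter over a list of position measurements taken dt seconds apart."""
    # Start at the first measurement with unknown (high-variance) velocity.
    kf = ConstantVelocityKalman(measurements[0], 0.0, meas_var, 100.0, 0.1, meas_var)
    for z in measurements[1:]:
        kf.predict(dt)
        kf.update(z)
    return kf
```

After a run, `kf.x` holds the smoothed position and the inferred velocity, even though velocity was never measured directly.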

Advancements in Processing Power for Handling Increased Data Volume

The sheer volume of data generated by these sensors is immense. Processing this data in real-time requires significant advancements in processing power. Modern Level 2 systems utilize powerful, specialized processors, often including multiple cores and GPUs, to handle the computational demands of sensor data processing, data fusion, and decision-making. The trend is towards the use of increasingly efficient and powerful hardware, such as specialized AI accelerators, to enable faster and more accurate processing.

This allows for more sophisticated algorithms and faster reaction times, contributing to improved safety and performance. For example, the adoption of dedicated AI accelerators, such as those based on Nvidia’s DRIVE platform, is enabling significantly faster processing of sensor data compared to earlier generations of systems.

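Sensor Data Flow in a Level 2 Autonomous Driving System

In outline, data flows from the sensors (cameras, radar, LiDAR, and ultrasonic) into the data fusion stage, which builds a unified picture of the environment. The decision-making software then uses that picture to command steering, braking, and acceleration.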

Safety and Reliability of Autonomous Driving Features

Level 2 driver-assistance systems (ADAS) in 2025 EVs offer significant advancements in driver assistance, but their safety and reliability remain crucial concerns. These systems, while helpful, are not fully self-driving and require constant driver attention and readiness to take control. The potential for accidents due to system limitations or unexpected situations necessitates a thorough examination of safety protocols and reliability measures. The inherent complexity of Level 2 ADAS introduces several potential safety challenges.

These systems rely on a complex interplay of sensors, software, and actuators, any of which could malfunction or fail, leading to unpredictable behavior. Moreover, the ever-changing real-world driving environment, with its unpredictable human and environmental factors, presents a significant hurdle for even the most sophisticated ADAS. The interaction between the system and the human driver, specifically the driver’s understanding of the system’s limitations and their response time to take over, also presents a considerable risk.

Potential Safety Concerns in Level 2 ADAS

Several factors contribute to the safety concerns surrounding Level 2 ADAS. Sensor limitations, such as blind spots or difficulties in adverse weather conditions (heavy rain, snow, or fog), can compromise the system’s ability to accurately perceive its surroundings. Software glitches or bugs can lead to unexpected system behavior or complete system failure. Finally, inaccurate map data or sudden events, such as an abrupt lane change by another driver or a pedestrian stepping into the road, can exceed what the system is able to handle.

For example, a failure in the radar system to detect a slow-moving vehicle in heavy fog could lead to a rear-end collision. Similarly, a malfunction in the lane-keeping assist system might cause the vehicle to drift into another lane, potentially resulting in a side-swipe accident.

Best Practices for Ensuring Reliability and Robustness

Robustness and reliability in Level 2 ADAS are achieved through a multi-faceted approach. Redundancy in sensor systems is critical; employing multiple sensor types (e.g., radar, lidar, cameras) allows for cross-validation and reduces the impact of individual sensor failures. Rigorous testing and validation procedures, including simulations and real-world testing in diverse environments, are essential to identify and address potential weaknesses.

Furthermore, continuous over-the-air software updates allow for the rapid deployment of patches and improvements to address identified vulnerabilities. Finally, a clear and intuitive human-machine interface (HMI) is crucial to ensure the driver understands the system’s capabilities and limitations. For instance, Tesla’s Autopilot system, while advanced, has faced criticism regarding its HMI; critics argue that it can lead drivers to misunderstand the system’s limitations, which may have contributed to accidents.
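The cross-validation idea behind sensor redundancy can be illustrated with a simple median check. This is a sketch under stated assumptions: the function name, the sensor labels, and the 2 m tolerance are hypothetical, and a real system would compare full object tracks rather than a single range value.

```python
from statistics import median


def cross_validate_range(readings, tolerance_m=2.0):
    """Cross-check range readings (in metres) for one object across sensors.

    readings maps a sensor name to its measured range, or None if that
    sensor returned nothing. Returns (consensus_range, suspect_sensors);
    consensus_range is None when fewer than two sensors agree.
    """
    valid = {name: r for name, r in readings.items() if r is not None}
    # A sensor with no return at all is always flagged for follow-up.
    suspects = [name for name, r in readings.items() if r is None]
    if len(valid) < 2:
        # Nothing to cross-check against, so no validated range exists.
        return None, sorted(suspects)

    centre = median(valid.values())
    agreeing = [n for n, r in valid.items() if abs(r - centre) <= tolerance_m]
    suspects += [n for n in valid if n not in agreeing]
    if len(agreeing) < 2:
        return None, sorted(suspects)
    return centre, sorted(suspects)
```

For example, `cross_validate_range({"radar": 42.1, "lidar": 41.8, "camera": 55.0})` returns `(42.1, ["camera"])`: radar and lidar agree, so the camera reading is flagged rather than trusted.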

Importance of Driver Monitoring Systems

Driver monitoring systems (DMS) are crucial for preventing accidents. DMS uses various sensors, such as cameras and infrared sensors, to continuously track the driver’s alertness and attentiveness. If the system detects signs of drowsiness, distraction, or inattention, it can provide warnings or even intervene to take control of the vehicle, preventing potential accidents. For instance, a DMS might detect that a driver is looking away from the road for an extended period and alert them with an audible warning or haptic feedback.

In more critical situations, the system might even gradually reduce the vehicle’s speed and safely bring it to a stop. The efficacy of DMS hinges on its ability to accurately detect driver impairment and its timely intervention capabilities. The integration of DMS with ADAS enhances overall safety by providing a safeguard against driver error.
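The escalation described above, from warnings through to a controlled stop, can be sketched as a small state tracker keyed on how long the driver has looked away. The thresholds, the `DmsAction` names, and the `DriverMonitor` class are hypothetical; real systems combine several attention signals and use manufacturer-specific timings.

```python
from enum import Enum


class DmsAction(Enum):
    NONE = "none"
    AUDIBLE_WARNING = "audible_warning"
    HAPTIC_WARNING = "haptic_warning"
    CONTROLLED_STOP = "controlled_stop"


class DriverMonitor:
    """Escalates the response the longer the driver's eyes stay off the road."""

    def __init__(self, warn_after_s=2.0, haptic_after_s=5.0, stop_after_s=10.0):
        # Thresholds are illustrative assumptions, not regulatory values.
        self.warn_after_s = warn_after_s
        self.haptic_after_s = haptic_after_s
        self.stop_after_s = stop_after_s
        self.eyes_off_s = 0.0

    def update(self, eyes_on_road, dt_s):
        """Feed one camera observation; return the action to take now."""
        if eyes_on_road:
            # Attention restored: reset the timer and clear any warning.
            self.eyes_off_s = 0.0
            return DmsAction.NONE
        self.eyes_off_s += dt_s
        if self.eyes_off_s >= self.stop_after_s:
            return DmsAction.CONTROLLED_STOP
        if self.eyes_off_s >= self.haptic_after_s:
            return DmsAction.HAPTIC_WARNING
        if self.eyes_off_s >= self.warn_after_s:
            return DmsAction.AUDIBLE_WARNING
        return DmsAction.NONE
```

Resetting the timer as soon as the driver looks back keeps brief glances at mirrors or instruments from triggering warnings.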

Potential Failure Modes and Mitigation Strategies for Level 2 ADAS

It is crucial to understand potential failure modes and implement effective mitigation strategies. Below is a list of potential failure modes and their corresponding mitigation strategies:

- Sensor failure (radar, LiDAR, camera): redundancy through multiple sensor types and sensor fusion algorithms.
- Software glitch or bug: rigorous software testing, over-the-air updates, and fail-safe mechanisms.
- Actuator malfunction (steering, braking, acceleration): redundant actuators, fail-safe mechanisms, and mechanical backups.
- Environmental factors (adverse weather, poor visibility): advanced sensor processing algorithms, improved sensor technology, and warnings when the system reaches its limits.
- Unexpected events (sudden lane changes, pedestrians): robust object detection and tracking algorithms, enhanced predictive capabilities, and driver intervention.
- Driver distraction or inattention: driver monitoring systems (DMS) and driver alerts.
- Map data inaccuracies: regular map updates and robust localization algorithms.

Consumer Perception and Adoption of Autonomous Features

Consumer attitudes towards Level 2 autonomous driving features are complex and vary significantly depending on factors like age, tech-savviness, and geographic location. While many appreciate the convenience and safety enhancements offered by features like adaptive cruise control and lane-keeping assist, concerns about reliability, safety, and the ethical implications of automated driving systems remain prevalent. This creates a fascinating dynamic in the automotive market, where manufacturers must carefully navigate consumer anxieties while effectively showcasing the benefits of these technologies.

Consumer Attitudes Towards Level 2 Autonomous Driving Features

A significant portion of consumers view Level 2 autonomy as a valuable safety feature, particularly adaptive cruise control which helps reduce driver fatigue on long journeys. However, trust in the technology is not universal. Many drivers remain hesitant to fully relinquish control, even with active safety features engaged, preferring to maintain a high degree of vigilance. This skepticism is often fueled by news reports of accidents involving autonomous driving systems, regardless of whether the accidents were directly caused by the technology’s failure or human error.

Marketing campaigns need to address these concerns head-on, emphasizing the assistive nature of these features and the importance of driver oversight.

Marketing Strategies for Promoting Level 2 Autonomous Features

Automakers employ various marketing strategies to promote Level 2 autonomous driving features. These strategies often focus on highlighting the convenience and safety aspects. For example, advertising campaigns might showcase the ease of driving in congested traffic with adaptive cruise control, or the reduced risk of lane departure accidents with lane-keeping assist. Many manufacturers also utilize interactive demonstrations and test drives to allow potential buyers to experience the features firsthand.

This experiential approach is crucial in building consumer confidence and dispelling misconceptions. Compelling visuals and emotionally resonant storytelling are also key elements of effective marketing. For example, a commercial might show a family arriving safely at their destination, highlighting the peace of mind offered by the technology.

Adoption Rates of Level 2 Autonomy Across Geographical Regions

Adoption rates of Level 2 autonomous driving features vary significantly across different geographical regions. Factors such as infrastructure development, regulatory environments, and consumer preferences play a crucial role. For example, countries with well-developed highway systems and a strong emphasis on technological innovation tend to show higher adoption rates. Conversely, regions with less advanced infrastructure or stricter regulations might exhibit slower adoption.

Data from market research firms indicates that North America and Europe currently lead in Level 2 adoption, while other regions are gradually catching up. However, the rate of adoption is expected to increase globally as the technology matures and becomes more affordable.

Consumer Preferences for Specific Level 2 Features

| Feature | High Preference (%) | Medium Preference (%) | Low Preference (%) |
|---|---|---|---|
| Adaptive Cruise Control | 65 | 25 | 10 |
| Lane Keeping Assist | 60 | 30 | 10 |
| Automatic Emergency Braking | 70 | 20 | 10 |
| Blind Spot Monitoring | 55 | 35 | 10 |

Note: These percentages are illustrative examples based on hypothetical market research data and should not be interpreted as precise figures.

The Future of Level 2 Autonomous Driving in EVs

Level 2 driver-assistance systems (ADAS) are already transforming the driving experience, but their evolution beyond 2025 promises even more significant advancements. We’ll explore the anticipated trajectory of these systems, considering the technological hurdles and market opportunities, the regulatory landscape, and the profound impact of AI.

Evolution of Level 2 ADAS Features

Beyond 2025, we can expect Level 2 systems to become significantly more sophisticated. Improved sensor fusion, incorporating data from cameras, radar, lidar, and ultrasonic sensors, will lead to more robust and reliable performance in challenging conditions like heavy rain or snow. Expect to see enhanced object recognition capabilities, allowing for smoother and safer interactions with pedestrians, cyclists, and other vehicles.

Furthermore, advancements in predictive capabilities will allow the system to anticipate potential hazards and proactively adjust driving behavior, potentially including smoother lane changes and more accurate speed adjustments in anticipation of traffic congestion. For example, Tesla’s Autopilot, already a prominent Level 2 system, is continuously updated with improved algorithms and sensor processing, demonstrating this ongoing evolution.

Challenges and Opportunities in Level 2 ADAS Development

The continued development of Level 2 ADAS faces several challenges. One key hurdle is ensuring consistent performance across diverse environmental conditions and driving scenarios. Another significant challenge is managing the complexities of sensor data fusion and algorithmic decision-making, particularly in edge cases that require quick and accurate responses. However, opportunities abound. The expanding availability of high-resolution mapping data can significantly improve the accuracy and reliability of localization and path planning.

Additionally, advancements in edge computing will allow for faster processing of sensor data, enabling more real-time responses. The growth of the EV market itself provides a substantial opportunity for increased adoption and further refinement of Level 2 ADAS technologies.

The Role of Legislation and Regulation

Legislation and regulation will play a crucial role in shaping the future of Level 2 autonomy. Clear and consistent safety standards are essential to ensure the reliability and trustworthiness of these systems. Regulations will likely address issues such as data privacy, cybersecurity, and the appropriate level of driver oversight. The development of standardized testing procedures and certification processes will be critical in fostering consumer confidence and promoting fair competition within the industry.

For instance, the European Union’s General Safety Regulation (GSR), Regulation (EU) 2019/2144, makes several driver-assistance features mandatory on new vehicles, including intelligent speed assistance, emergency lane keeping, and driver drowsiness and attention warning.

The Impact of Artificial Intelligence

Advancements in AI will be pivotal in driving the next generation of Level 2 ADAS. Deep learning techniques are already improving object recognition, path planning, and decision-making capabilities. Reinforcement learning algorithms can be used to train systems to handle a wider range of driving scenarios, including those not explicitly programmed. The integration of AI will enable Level 2 systems to learn from real-world driving data, continuously improving their performance and adapting to new situations.

This ongoing learning process, coupled with improved sensor technology, will lead to more robust and intelligent driving assistance features. For example, Waymo’s self-driving technology, which operates at a higher automation level than Level 2, relies heavily on AI algorithms to process sensor data and make driving decisions, demonstrating the potential of AI in autonomous driving.

Concluding Remarks

So, 2025 EVs and their autonomous features? It’s a mixed bag, right? We’re definitely seeing some killer advancements in Level 2 tech, making driving safer and more convenient. But, it’s crucial to remember that these systems aren’t perfect, and responsible driving is still key. The future looks bright, with continuous improvements in AI and sensor technology promising even more advanced features.

But it’s a marathon, not a sprint, and regulations will play a big part in how fast we get there. The bottom line? Get ready for a smoother, safer, and maybe even slightly more boring (in a good way!) driving experience.